Impact Factor : 0.548

- NLM ID: 101723284

- OCoLC: 999826537

- LCCN: 2017202541

Michele N Minuto*1, Gianluca Marcocci1, Domenico Soriero1, Gregorio Santori1, Marco Sguanci1, Francesca Mandolfino1, Marco Casaccia1, Rosario Fornaro1, Cesare Stabilini1, Gianni Vercelli2, Simone Marcutti2, Marco Gaudina2, Francesca Stratta1 and Marco Frascio1

Received: May 29, 2018; Published: June 11, 2018

*Corresponding author: Michele N Minuto, MD PhD, Dipartimento di Scienze Chirurgiche (DISC), Università degli Studi di Genova, Via L.B. Alberti 6, 16132, Genoa, Italy

DOI: 10.26717/BJSTR.2018.05.001190

Laparoscopic surgery is the standard approach for most surgical operations because of its benefits for the patients, although it requires a significant learning curve. For this reason, the FDA established the need for certified laparoscopic training programs, supported by validated surgical simulators. Our multidisciplinary team developed a virtual surgical simulator (eLap4D) based on two features: low cost and realistic haptic feedback. This study presents the validation process of the eLap4D, performed through the construct and face validities.

The authors preliminarily analyzed and excluded the possible impact of videogame experience on eLap4D users. The construct validity was used to objectively assess the surgical value of five basic skills by comparing the performances between two groups with different levels of laparoscopy experience. The presence of a learning curve was also evaluated by comparing the results of the first and second attempts. The difference among exercises was investigated in terms of the difficulty and kind of basic gestures, comparing the completion rates of every task in the three difficulty levels each. Face validation was performed using a specific questionnaire investigating the realism and accuracy of the simulator. This last survey was administered only to experienced surgeons. The validation process indicated that eLap4D can measure surgical ability and not just videogame experience. It also positively affects the learning curve and reproduces different basic gestures and levels of difficulty. Face validity confirmed that its structural features and ergonomics are satisfactory. In conclusion, eLap4D seems suitable and useful for learning basic laparoscopy skills.

Keywords: Surgical Simulator, Virtual Reality, Training, Haptic Feedback, Validation, Face Validity, Construct Validity

Abbreviations: EUSTM: European Society for Translational Medicine, TM: Translational Medicine, TS: Translational Science, SBME: Simulation-Based Medical Education, FDA: Food & Drug Administration, FLS: Fundamentals of Laparoscopic Surgery, HEC: Hand-eye coordination

The European Society for Translational Medicine (EUSTM) defines Translational Medicine (TM) as an interdisciplinary branch of the biomedical field supported by three main pillars: “benchside, bedside and community”. The aim of TM is to enhance prevention, diagnosis, and therapy [1]. Translation describes “translating” laboratory results into potential health benefits for patients. Research on medical education contributes to translational science (TS) because its results enrich educational settings and improve patient care practices. Simulation-Based Medical Education (SBME) has demonstrated its role in achieving such results [2]. In April 2004, the Food & Drug Administration (FDA) became involved in the discussion about the training of young surgeons, demanding the development of a learning program based on simulators that was primarily tested and validated by industry experts. The first FDA mandate concerning surgical training definitively marked the start of the “Simulation era” [3,4]. At present, also in view of its ethical and social implications, surgery requires learning in a simulated and safe area before operating on the patient [5,6]. For all these reasons, the Accreditation Council for Graduate Medical Education has stipulated that all accredited structures for surgical instruction must include simulation [7].

In 2016, Kurashima reviewed the literature on how simulation is integrated into training programs. Many centers are equipped with simulation areas, but there is still significant disagreement about how to develop training programs. He concludes that efforts are needed to standardize these training paths [8]. North America is trying to do this. In fact, standardized training courses (for example FLS - Fundamentals of Laparoscopic Surgery) are needed to acquire “American Board of Surgery” certification [7]. The new Italian medical specialization teaching system was approved in 2017, although there was no reference to the integration of simulation into training programs. Despite this gap, many surgeons are aware that proper simulation training is of primary importance to the education of young surgeons [9-14], so dedicated programs are being set up, but only in selected schools. These programs can be supported by following two main paths:

I. Using devices that are already on the market (the more expensive option) and

II. Undertaking research projects aiming to develop custom-built surgical simulators.

Two main simulator models are currently available: physical and virtual platforms. Physical simulators (box trainers) were the first type to be introduced. They are cheap, and the haptic feedback is authentic. They reproduce basic gestures but do not allow entire surgical procedures or intraoperative complications to be reproduced. These restrictions have been overcome by the introduction of virtual platforms that allow more complex and realistic surgical scenarios, in addition to basic skills, to be simulated. Several studies have demonstrated the effectiveness of virtual platforms with respect to surgical training (i.e., evidence of the reproduction of a learning curve) [15-17], but their high costs and frequently unreliable haptic feedback do not permit their worldwide diffusion in universities or in the departments involved in teaching programs. Haptic feedback must be a key feature of a virtual simulator because its realism is essential for learning laparoscopic techniques correctly [18-20].

Nevertheless, it is often the most neglected part of the system because of, for example, the lack of a mathematical algorithm that can calibrate the real feedback force during the interaction with virtual organs and tissue [21]. Aiming to improve the surgical training program at the University of Genoa, a team composed of general surgeons and engineers has developed a virtual surgical simulator (eLap4D) focusing on two essential features: the lowest possible cost and haptic feedback that is as realistic as possible. To objectively assess the value and adequacy of a surgical simulator as a laparoscopic training platform, as already done for other simulators [22-27], eLap4D must undergo a validation process, as established by the FDA’s protocol [28].

The eLap4D system is composed of hardware and software components that interact to simulate the environment, its physical and visual rendering, and its haptic feedback.

a. The simulation system. The system is based on a server developed as a Node.js application that allows interactions among the visual system, the different components of the hardware and the database containing the user data. The server works as a “gate” and allows the interactions among all the elements of the system (hardware or software). The user interacts with the system through an HTML web page by working with a 3D Unity Web Player plugin; Unity is a game engine used for the graphic development of videogames or 3D animations. The use of a web page interface was chosen because of its rapid data exchange and because, in the future, it might permit quick interactions with another user or a supervisor (e.g., a tutor) [29].

b. The rendering system. The meshes were shaped using 3D Studio Max®, developed by Autodesk© (2016 Autodesk Inc) [30], and then imported in 3D Unity together with texture and UV maps. Finally, different visual effects (e.g., the shader effect) have been added to the meshes in 3D Unity to create the most realistic result possible. Physical modeling was developed using more dynamic parametric protocols to avoid system overloads.

c. The haptic feedback system. The key feature of the simulator is obtained through a potentiometer (a vibrating engine) managed by an Arduino electronic card and by three Phantom Omni® Haptic Devices (Sensable®).

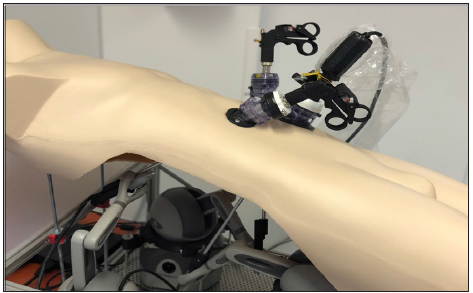

The Arduino card is essential for this mechanism; it limits the grip of a forceps when tissue is grabbed, and it allows the vibration of the tissues that are being manipulated or cut to be felt [31]. The three Phantom Omnis are directly connected to the handpieces of the surgical instruments and are used to simulate the resistance of the tissues while moving the instruments or pulling the different tissues and to render limitations from the surrounding structures (e.g., simulating collisions) [32]. During the training session, the eLap4D hardware interacts with the software component through instruments that are made using real laparoscopic surgery instruments (Figure 1). Every exercise is recorded, and a final evaluation is performed on the basis of its completion, time, score, and possible penalties incurred during the single exercise, thus allowing the slope of the learning curve to be registered and verified.

Figure 1: The eLap4D simulator is composed of a dummy torso: the hardware interacts with the software component through instruments that are made using real laparoscopic surgery instruments.

This procedure aimed to evaluate if eLap4D can be used as a training virtual platform in laparoscopic surgery. Among the five validities recognized (content, face, construct, concurrent and predictive), we employed the construct validity and face validity because they are the most commonly used in literature for analyzing integrated virtual-mechanic systems. The construct validity was evaluated by examining the results obtained by two groups (divided by their laparoscopic experience) in five tasks with three different levels each to investigate eLap4D’s aptitude in the following:

a. Measuring surgical ability: can eLap4D distinguish experts from trainees in laparoscopy?

b. Offering different types of tasks and altering their complexity: can the current evaluation system stratify different degrees of complexity?

c. Having a positive impact on the learning curve: does user performance increase with time and experience?

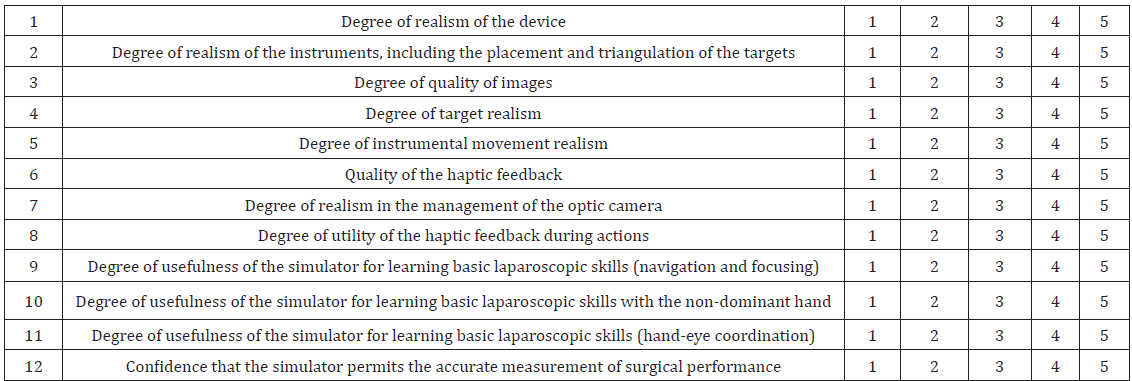

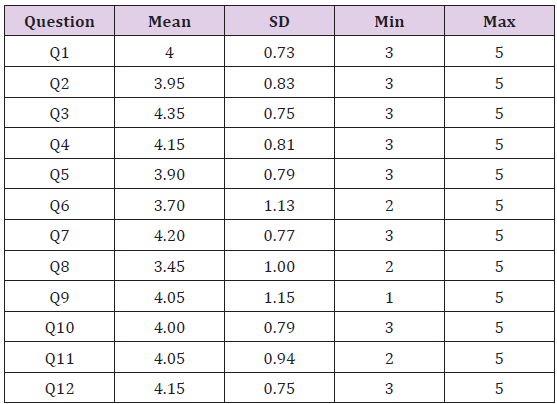

Face validity was assessed by surgeons experienced in laparoscopy with a questionnaire composed of 12 closed-ended questions about the realism of the following topics:

a. Hardware (ergonomics, structure, similarity with the real operating room, and of the surgical devices);

b. Software (haptic feedback, quality of the targets, and tasks);

c. A potential educational role in laparoscopic surgery.

For each question a score was assigned according to the “Likert” rating scale (1. Highly inadequate, 2. Insufficient, 3. Sufficient, 4. Good, 5. Very good) (Table 1) [33,34].

Table 1: Questionnaire administered to experienced surgeons in the face validation study of the simulator: for each question a score was assigned according to the “Likert” rating scale (1. Highly inadequate, 2. Insufficient, 3. Sufficient, 4. Good, 5. Very good).
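To illustrate how a questionnaire of this kind can be summarized, the following Python sketch averages the Likert ratings per question and overall. It is only an illustration: the function name is hypothetical, the ratings are invented placeholders rather than study data, and only four questions are shown instead of the twelve in the real questionnaire.

```python
from statistics import mean

# Rating scale used in the face validation questionnaire.
LIKERT_LABELS = {
    1: "Highly inadequate",
    2: "Insufficient",
    3: "Sufficient",
    4: "Good",
    5: "Very good",
}


def summarize_likert(responses):
    """Return (mean rating per question, mean of those per-question means).

    `responses` holds one list per respondent, with one rating per question.
    """
    for row in responses:
        if any(rating not in LIKERT_LABELS for rating in row):
            raise ValueError("ratings must be integers from 1 to 5")
    per_question = [mean(column) for column in zip(*responses)]
    return per_question, mean(per_question)


# Placeholder data (NOT study data): 3 respondents x 4 questions.
example = [
    [4, 3, 5, 4],
    [5, 3, 4, 4],
    [3, 3, 3, 4],
]
per_question, overall = summarize_likert(example)
print(per_question, overall)
```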

For the purpose of the study, 40 individuals were recruited and divided into two categories:

a. Group STUD: 20 medical students without any experience in laparoscopic surgery; and

b. Group INT: 20 residents in general surgery with at least 10 laparoscopic operations performed as first surgeon in the last year.

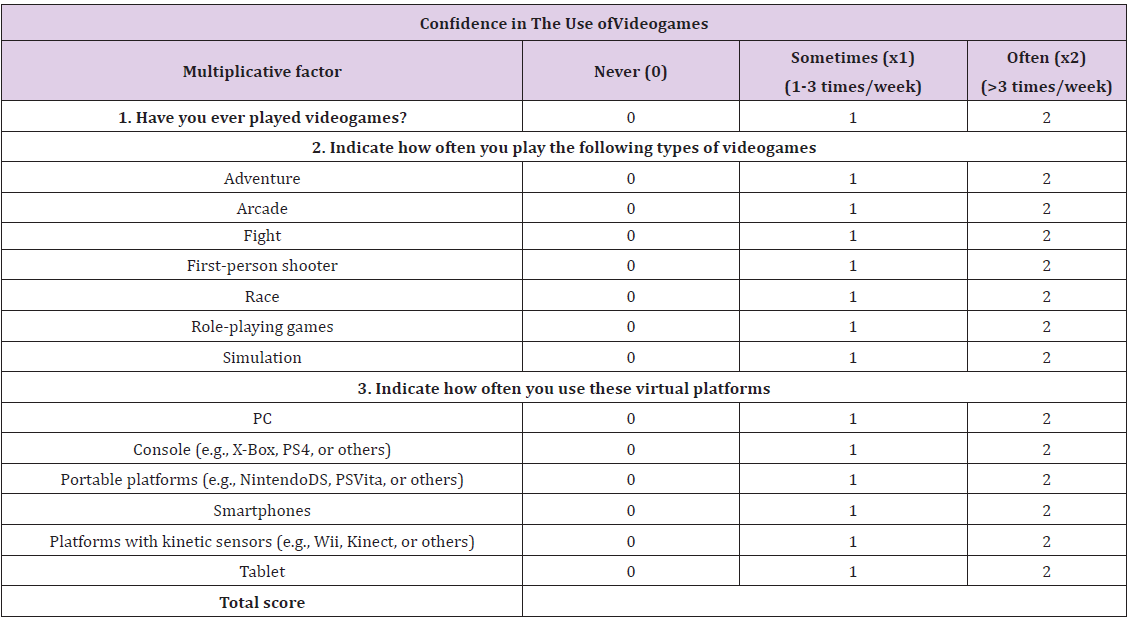

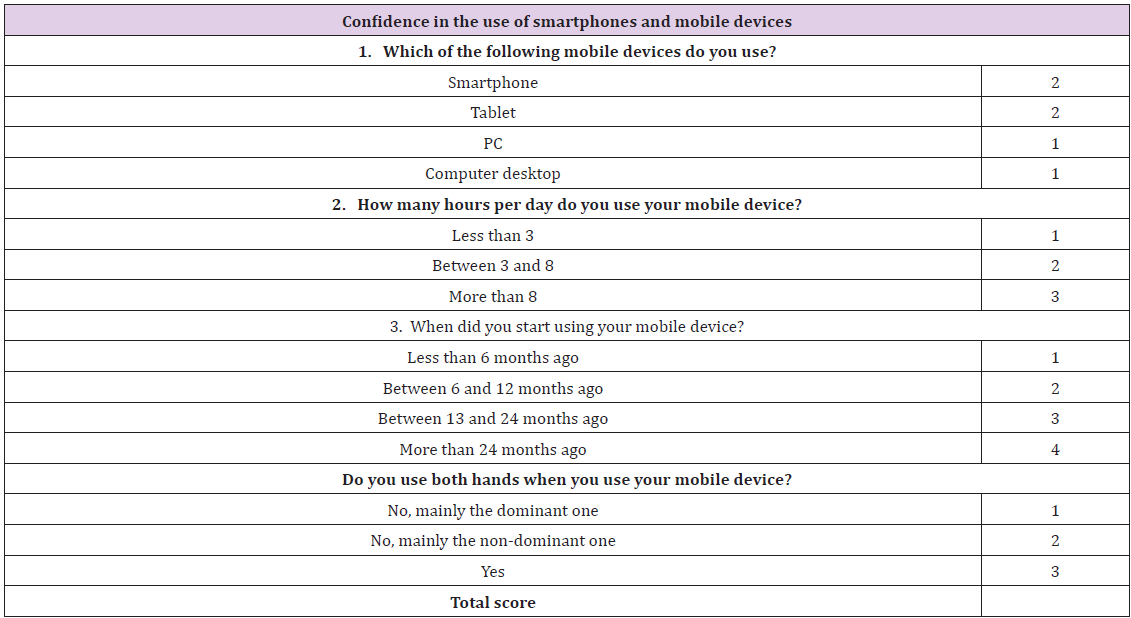

Before the beginning of the validation process, each participant answered an initial questionnaire about their personal level of confidence in the use of videogames, computers, smartphones, tablets, and other virtual platforms [35]. This questionnaire yielded a score representing the degree of familiarity with electronic devices (VG score). This was done to verify whether the score obtained with eLap4D was somehow affected by the user’s experience with these devices (the so-called “videogame effect”) (Tables 2a & 2b).

Table 2: a) and b) The two questionnaires were administered to each participant to score their confidence with technology devices (videogames, tablets, and smartphones). This score was calculated based on the kind of platform and on the frequency and type of use. The sum of all items of the questionnaire is defined as the VG score.


Then, after an introduction to the machine and being dressed in a real surgical gown (to adhere to the reality of the operative room, figure 2), each participant performed 5 basic skills with 3 difficulty levels each 2 times, for a total of 30 exercises per subject. To guarantee the correct statistical analysis, a closed testing system where users had a limited number of attempts (two for every exercise at each level) was chosen because an open testing system could show a bias such as weakness, time delays or methodological limits. At the end of the entire cycle of exercises, the INT group was asked to complete a second questionnaire used for face validation.
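The size of the resulting data set follows directly from this design. As a quick check, the short Python sketch below enumerates every combination of task, level and attempt and derives the record counts quoted in the results (n=600 per group, n=300 per attempt). The variable names are illustrative and are not part of the eLap4D software.

```python
from itertools import product

TASKS = range(1, 6)      # five basic-skill tasks
LEVELS = range(1, 4)     # three difficulty levels per task
ATTEMPTS = range(1, 3)   # two attempts per task and level
PARTICIPANTS_PER_GROUP = 20

# Every exercise a single participant performs.
exercises_per_subject = list(product(TASKS, LEVELS, ATTEMPTS))

# Records collected for one group, in total and per attempt.
records_per_group = PARTICIPANTS_PER_GROUP * len(exercises_per_subject)
records_per_attempt = records_per_group // len(ATTEMPTS)

print(len(exercises_per_subject))  # 30
print(records_per_group)           # 600
print(records_per_attempt)         # 300
```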

For construct validation, we have selected 5 tasks that should be able to reproduce different laparoscopic basic gestures (basic skills), with 3 levels of difficulty for each one. The main categories are:

1. Laparoscopic focusing/navigation: The user, handling a 30° laparoscopic camera with one hand, has to identify and focus, within a set time, on five targets that were placed randomly in a scenario composed of a wide field containing different virtual structures, while avoiding collisions with surrounding structures, which would add penalties. As the level of difficulty increased, the available time decreased, and the camera was blurred with a so-called “fog effect”. Two different exercises were developed:

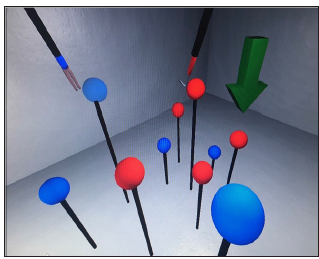

a. Task 1 evaluates macro-focusing ability, which does not require precision because of its wide space and low number of obstacles. In this exercise the user, handling a 30° endoscopic camera, needs to focus on different solid targets in a static scenario (Figure 2). This task evaluates the macro-focusing and handling of an angled camera in a wide scenario.

Figure 2: Exercise 1, evaluating macro-focusing ability. The lighted objects, located in a wide space and with a low number of obstacles, should be identified and focused, using a 30° endoscopic camera. This task evaluates the macro-focusing and handling of the angled camera in a wide scenario.

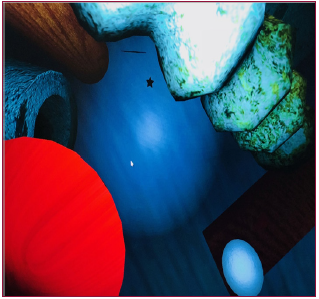

b. Task 2 evaluates micro-focusing ability, which requires more movement precision because of a narrow space and the presence of more obstacles in the scenario. The user, working with a 30° camera, has to focus on several hidden micro-targets placed in different areas of the scenario (Figure 3).

Figure 3: Exercise 2 evaluates micro-focusing ability: it requires more movement precision because of a narrow space and the presence of more obstacles in the scenario. The user, working with a 30° camera, has to focus on several hidden micro-targets placed in different areas of the scenario.

2. Hand-eye coordination (HEC): The user should handle the camera and another instrument using both hands. Three different exercises were developed:

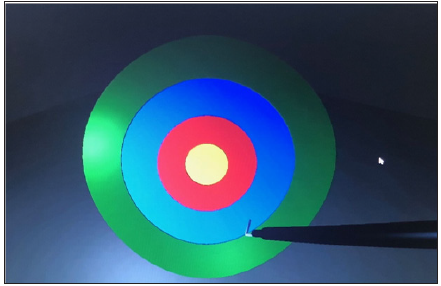

a. Task 3: The goal is to touch the center of a circular target with the instrument’s distal end. With increasing levels of difficulty, the available time decreases and the user has to achieve the target using the dominant hand (level 1), the opposite hand (level 2), and both hands simultaneously with blurred vision (level 3). A penalty is assigned when the target is overstepped. This task aims to train the precision, accuracy, simultaneity and unrefined coordination of bimanual gesture (Figure 4).

b. Task 4. The goal is to touch blue and red spheres located at different heights and depths with the distal part of two forceps (blue and red), respecting the “color instrument-sphere” code. In level 1, the sequence is totally arbitrary; in level 2, it alternates; and in the last level, it is established by the software (Figure 5). Penalties are assigned when the user does not respect these rules. It assesses the ability in depth perception and refined coordination of bimanual gesture.

Figure 4: Exercise 3: The goal is to touch the center of a circular target with the instrument’s distal end. With increasing levels of difficulty, the available time decreases and the user has to achieve the target using the dominant hand (level 1), the opposite hand (level 2), and both hands simultaneously with blurred vision (level 3). This task aims to train the precision, accuracy, simultaneity and unrefined coordination of bimanual gesture.

Figure 5: Exercise 4: the user should touch the blue and red spheres located at different heights and depths with the distal part of two forceps (blue and red), respecting the “color instrument-sphere” code. In level 1, the sequence is totally arbitrary; in level 2, it alternates; and in the last level, it is established by the software.

Figure 6: Exercise 5. The goal is to grab three cubes and place them in their respective boxes, using the forceps with the left hand and the camera with the right. It evaluates grasping, depth perception and bimanual coordination.

c. Task 5. The goal is to grab three cubes and place them in their respective baskets, using the forceps with the left hand and the camera with the right (Figure 6). When increasing the difficulty level, the time available decreases. Penalties are assigned when cubes are not placed in their own baskets. It evaluates grasping, depth perception and bimanual coordination.

For each exercise, different parameters were recorded:

a. Completion (percentage of the task performed within the established time)

b. Penalty (number of penalties for each task)

c. Total time (time that the user needs to accomplish the task)

d. Score (task’s score according to the number of targets achieved)
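One possible way to represent these four parameters, together with the descriptive statistics reported in the results (mean, median, range, standard deviation, completion rate), is sketched below in Python. The record layout, the field names and the choice of the sample standard deviation are assumptions made for illustration, and completion is simplified to a yes/no flag; the example records are invented and do not come from the study.

```python
from dataclasses import dataclass
from statistics import mean, median, stdev


@dataclass
class ExerciseRecord:
    """One recorded exercise (hypothetical layout, not the eLap4D schema)."""
    task: int          # 1..5
    level: int         # 1..3
    attempt: int       # 1 or 2
    completed: bool    # finished within the established time
    penalties: int     # number of penalties in the exercise
    total_time: float  # seconds needed to accomplish the task
    score: int         # score based on the targets achieved


def summarize(values):
    """Mean, median, range and (sample) standard deviation of a parameter."""
    return {
        "mean": mean(values),
        "median": median(values),
        "range": (min(values), max(values)),
        "sd": stdev(values),
    }


def completion_rate(records):
    """Fraction of the recorded exercises that were completed."""
    return sum(r.completed for r in records) / len(records)


# Invented example records, for illustration only.
records = [
    ExerciseRecord(1, 1, 1, True, 0, 60.0, 9),
    ExerciseRecord(1, 1, 2, True, 1, 50.0, 10),
    ExerciseRecord(1, 2, 1, False, 2, 150.0, 4),
    ExerciseRecord(1, 2, 2, True, 0, 100.0, 8),
]
time_summary = summarize([r.total_time for r in records])
rate = completion_rate(records)
print(time_summary, rate)
```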

Statistical analysis of the first three parameters (continuous variables) was performed using the Wilcoxon-Mann-Whitney test, considering a p value <0.05 for statistical significance. The last parameter, a categorical variable, was analyzed with the Fisher exact test, assuming the same p value for statistical significance.
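For readers unfamiliar with the Wilcoxon-Mann-Whitney test, a minimal Python sketch of the rank-based comparison of two independent groups is given below. It is not the authors' analysis code (the software used is not stated): it relies on the normal approximation with a tie correction and no continuity correction, which is a common choice for large samples, and the two lists of completion times are invented placeholders.

```python
from collections import Counter
from math import erfc, sqrt


def midranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1  # mean of positions start+1 .. end+1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared
        start = end + 1
    return ranks


def mann_whitney_u(x, y):
    """Return (U statistic of x, two-sided p-value by normal approximation)."""
    pooled = list(x) + list(y)
    n1, n2, n = len(x), len(y), len(pooled)
    ranks = midranks(pooled)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    # Tie correction: each group of t tied values lowers the variance of U.
    tie_term = sum(t**3 - t for t in Counter(pooled).values())
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance <= 0:  # all observations identical: no evidence of a difference
        return u1, 1.0
    z = (u1 - n1 * n2 / 2) / sqrt(variance)
    return u1, erfc(abs(z) / sqrt(2))


# Invented completion times in seconds (NOT study data).
stud_times = [88, 95, 71, 102, 69]
int_times = [57, 63, 49, 80, 66]
u_stat, p_value = mann_whitney_u(stud_times, int_times)
print(u_stat, p_value)
```

A p-value below the 0.05 threshold stated above would be read as a statistically significant difference between the two groups.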

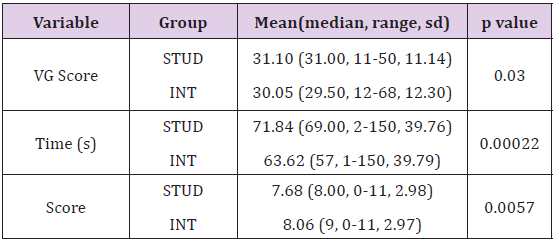

Our preliminary analysis aimed at verifying if the simulator was more similar to a common videogame or to a surgical simulator. This analysis was undertaken first by comparing the mean scores obtained by both groups in the questionnaire in terms of their confidence in the use of virtual platforms (VG score). In the STUD group, the mean VG score was 31.10 (median: 31, range: 11-50, sd: 11.14), whereas the INT group had a mean VG score of 30.05 (median: 29.50, range: 12-68, sd: 12.30). The Wilcoxon-Mann-Whitney test measured a statistically significant difference between the two groups (p=0.03) (Table 3). After this preliminary evaluation, the analysis focused on the results obtained by the two groups in the virtual exercises (the “construct validity”). Overall, in the STUD group, tasks were completed in a mean time of 71.84 seconds (median: 69, range: 2-150, sd: 39.76), with a mean score of 7.68 (median: 8, range: 0-11, sd: 2.98), a mean number of penalties of 0.81 (median: 0, range: 0-9, sd: 1.42), and a completion rate of 417 exercises (69.5%). The INT group had a mean completion time of 63.62 seconds (median: 57, range: 1-150, sd: 39.79), a mean score of 8.06 (median: 9, range: 0-11, sd: 2.97), a mean number of penalties of 0.77 (median: 0, range: 0-9, sd: 1.36), and a completion rate of 456 exercises (76%).

Table 3: Comparison between the groups in terms of VG score, time, and score with respect to all records (n=600 for each group).

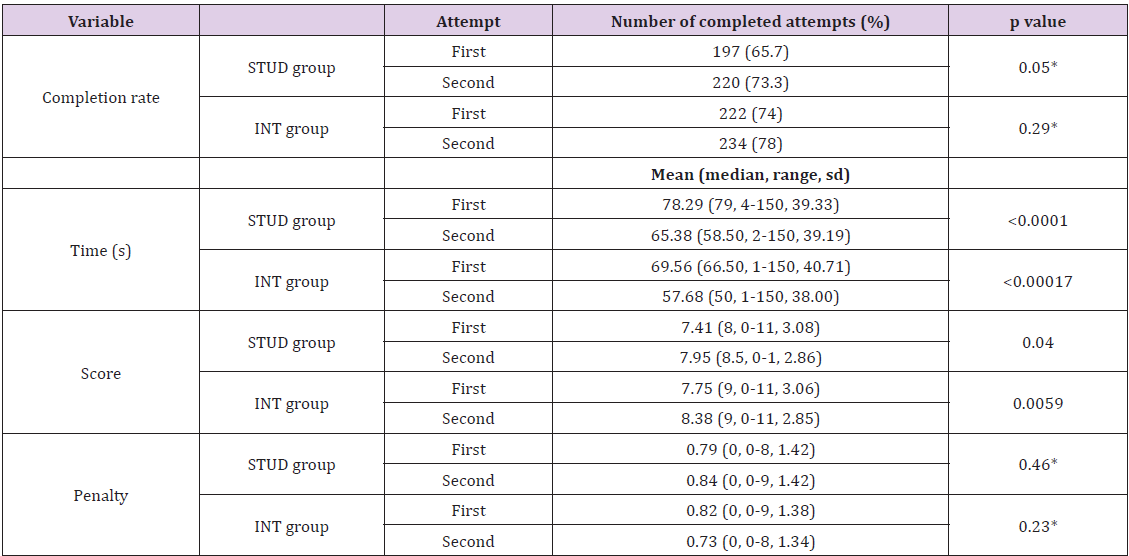

The statistical analysis revealed a statistically significant difference between the groups, always in favor of the surgeons (both p<0.01), as shown in table 3. Then, the results obtained within each group were examined: to allow a correct interpretation of data, n=600 refers to the full records (20 participants/group × 5 tasks × 3 levels of difficulty each × 2 attempts), and n=300 refers to the first or second attempt only. The mean time and mean score obtained in the first and second attempts by the two groups are summarized in tables 4 and 5, respectively. These raw results confirm that the significant difference measured between the groups is maintained in all parameters except the final scores after the first attempt. The results obtained for the first and second attempts of each group were then compared, with the aim of evaluating whether there was a significant improvement in performance that could represent the start of a learning curve. In the STUD group, the five tasks were completed 197 out of 300 times on the first attempt (65.7%) and 220/300 on the second (73.3%).

This difference, although evident, did not reach statistical significance (p=0.05). However, a statistically significant improvement was recorded for the completion time (p<0.0001) and the score (p=0.04), although the penalties did not significantly improve (p=0.46). The same analyses were carried out for the INT group. The results showed that the completion rate of the exercises improved between the first and second attempts, but this difference did not reach statistical significance (p=0.29). However, a statistically significant improvement was recorded for the time (p=0.00017) and the score (p=0.0059), and the penalties decreased but not significantly (p=0.23). These results are summarized in table 6. Furthermore, to evaluate if different difficulty levels could correctly stratify and underline an improvement in technical abilities, the results obtained by the two groups at the different levels were analyzed and compared. The results obtained show that the higher the level of difficulty was, the more the score decreased (p=0.001) and the more the penalties rose (p<0.0001), as shown in table 7.

Table 6: Comparison between the first and second attempts by the STUD and INT groups (n=300 for each attempt)

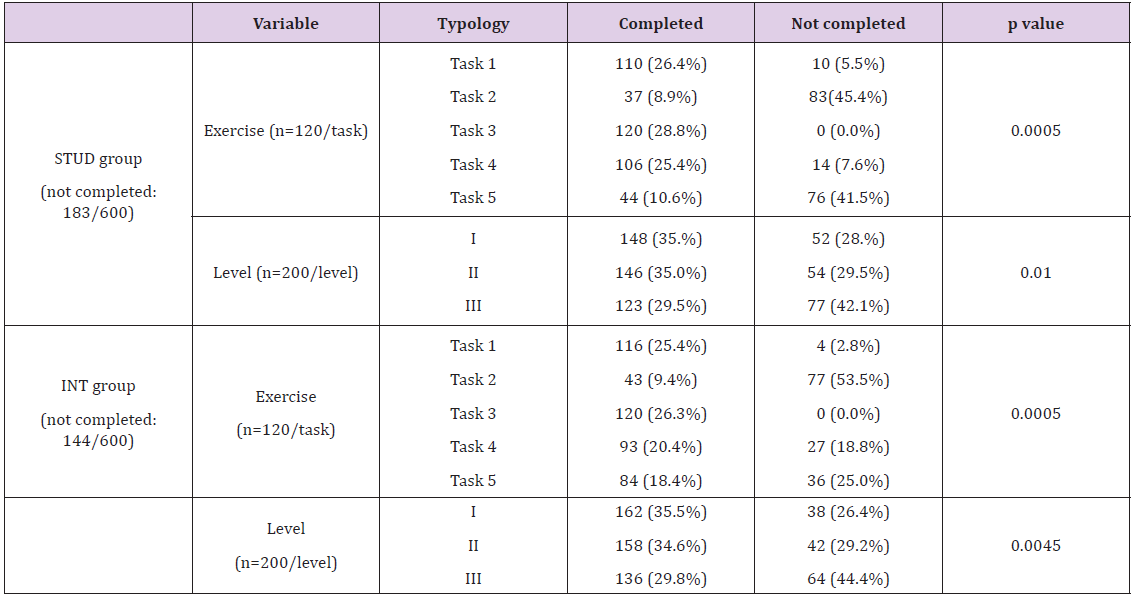
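As a worked check of the completion-rate comparison, the sketch below implements a two-sided Fisher exact test in plain Python and applies it to the STUD counts reported above (197 of 300 exercises completed on the first attempt, 220 of 300 on the second). It assumes that this comparison was the one analyzed with the Fisher exact test, and it uses the common convention of summing the probabilities of all tables that are no more likely than the observed one; the software actually used by the authors is not stated.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    total_tables = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x "successes" in the first row,
        # with all row and column totals held fixed.
        return comb(row1, x) * comb(row2, col1 - x) / total_tables

    p_observed = prob(a)
    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    # Sum every table that is at most as probable as the observed one
    # (the small tolerance guards against floating-point ties).
    return sum(
        p
        for p in (prob(x) for x in range(lowest, highest + 1))
        if p <= p_observed * (1 + 1e-9)
    )


# STUD group: completed vs not completed, first vs second attempt.
first_completed, second_completed, per_attempt = 197, 220, 300
p_completion = fisher_exact_two_sided(
    first_completed, per_attempt - first_completed,
    second_completed, per_attempt - second_completed,
)
print(round(p_completion, 3))
```

The printed value can be compared with the p=0.05 reported for this comparison; small differences between implementations of the two-sided test are to be expected.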

Finally, the completion rate of the exercises at each difficulty level (n=600 for each group) was significantly lower with increasing difficulty levels (p<0.01 for both groups), as shown in table 8. For further speculation about the value of single tasks, the same table also shows the completion rate of the 5 exercises.

Table 8: Completion rate of each single task at three difficulty levels by the STUD and INT groups (n=600 for each group).

The second part of the validation process focused on the INT group’s assessment of the realism of the software, the hardware and the potential educational role of eLap4D in laparoscopic surgery. The questionnaire results are shown in table 9.

Table 9: Face validation. Overall mean score of the face validation questionnaire, which was a Likert scale (1. Highly inadequate, 2. Insufficient, 3. Sufficient, 4. Good, 5. Very good).

Over the past several decades, surgical learning has been based on three different models: the traditional triad “see one, do one, teach one”, the cadaveric model and the animal one. According to the first method, the young surgeon needs to initially learn from the more experienced colleague’s surgical movements, reproduce the same gestures under a gradually decreasing level of supervision, and then teach them to less experienced trainees [36]. The cadaveric and animal models are focused mainly on the acquisition of gestures repetitively performed on human cadavers (in a real, although bloodless, surgical field) and/or live animals (in a surgical field that is similar to that of a human, but with a real hemorrhagic risk). All of these learning strategies are associated with ethical, legal and economic issues. Over the last decade, the simultaneous advance of both technology and surgery has allowed the development of surgical simulators, focused mainly on training the laparoscopic branch of surgery. Simulators allow the basic surgical techniques to be learned in a lab before being transferred to a patient in the operating room. Several studies have demonstrated the effectiveness of these platforms on surgical learning, such as through the reproduction of a learning curve [37-39], to the point that the FDA has established the need for surgical learning programs based on tested and validated simulators [4].

In this new setting, the first application of this model has been the introduction of FLS (Fundamentals of Laparoscopic Surgery) certification, which is now required to be certified by the American Board of Surgery [7]. With the aim of improving the training experience of young surgeons affiliated with the surgical programs of the University of Genoa, a team composed of surgeons and engineers has designed and developed a virtual surgical simulator (eLap4D) that focuses on two essential features: the lowest possible cost and a realistic haptic feedback. Today, eLap4D is able to reproduce five basic skills at three different levels of difficulty. To assess its value in surgical training, it underwent a validation process, as established by FDA protocols. For this purpose, we tested two groups of subjects: those with laparoscopic surgery experience and those without any such experience. The first analysis evaluated the possible influence that videogame ability had on the surgery simulation reproduced by the eLap4D and, therefore, the possibility that eLap4D was closer to a videogame platform than to an instrument that can discriminate surgical abilities.

For this purpose, a dedicated questionnaire was administered to all participants, and its results showed that the group composed of subjects with no experience in surgery was more confident with videogames and other technological platforms than were the surgical experts. Nonetheless, our results also showed that this latter population obtained significantly better results in the “surgical” exercises. Based on these results, we could conclude that the potential effect that we called the “videogame effect” (the paradox that those who are more skilled in videogames would perform better than surgeons in surgical tasks) did not bias the software behind eLap4D and that the machine was able to evaluate different levels of surgical abilities. After this premise, the validation of eLap4D was based on both construct and face validity. The construct validity aimed to objectively evaluate the results obtained by the two groups on five basic skills. The hypothesis to be verified was that the experienced subjects would obtain better results in the exercises, which would demonstrate that the platform can discriminate and evaluate the users’ surgical abilities.

The overall comparison between the groups showed that both time and score were significantly better in the INT group for both attempts. With respect to the penalties, we observed that improvement is evident in the group composed of surgeons, although this was not statistically significant, but that the group composed of students had slightly worse results in their second attempts. This result probably reflects a realistic need for more attempts to reach a thorough performance of the surgical exercises and will be further elucidated by recording more performances of the exercises to verify the effective reproduction of a sound learning curve. Within the STUD group, we recorded a higher completion rate for the second attempt than for the first one (73.3% vs 65.7%, respectively), and a statistically significant improvement was recorded in the time and score variables. In contrast, the penalties (which contribute to the total score) increased, although not significantly. The analysis of the INT group produced results similar to those of the STUD group. In the second attempt, surgeons had improved their completion rate, their penalties decreased, and the time and score variables improved.

However, only the last two parameters improved significantly, probably reflecting the fact that although the confidence with the instrument improved, the technical aspect of the gesture must still be improved. The improvements between the first and the second attempts recorded in both groups can represent the beginning of a learning curve, which is a typical feature of any manual activity. This improvement is usually progressive: very quick at first (in our analysis it is actually statistically significant), with progressive improvement until a plateau phase (in terms of results and execution time) is reached. The results obtained in this study are therefore likely those of a starting learning curve, although this speculation needs to be confirmed with more repetitions of the exercises and more subjects in both groups. The second part of the validation process focused on face validity. This was performed by analyzing the results obtained by the questionnaire given to the experts’ group only. The results underlined an overall positive degree of satisfaction, thus supporting the usefulness of eLap4D in laparoscopic teaching. Our results therefore seem to confirm that haptic feedback is one of the key features of a realistic surgical simulator. In the current version of the simulator, this parameter is satisfactory, although still far from perfect: current efforts therefore aim to improve this feature, which is unanimously perceived as essential to the simulation of laparoscopic surgery.

The construct validity process highlighted that eLap4D can measure the surgical ability and is not influenced by a “videogame effect”, which would have led those who are skilled in videogames to achieve better scores in the surgical tasks than the expert surgeons. Moreover, the results obtained with our simulator indicated the start of a learning curve for both groups in all of the basic tasks that are currently available. Moreover, although both groups improved their results, the simulator maintained the capability to discriminate between the different surgical experience levels of the two groups. Finally, the face validity process has proved the high quality of the eLap4D’s hardware in terms of surgical scenario, devices, and ergonomics. It also indicated that the software can reproduce realistic laparoscopic basic gestures at different levels of difficulty, although the haptic feedback needs to be improved. On the basis of these results, eLap4D, although still in a development phase, is already used as a laparoscopic training platform in the General Surgery programs at the University of Genoa.